Why We Built QFlow: Deno, QTI 3.0, and the Case for a New Authoring System

QTI 3.0 is mature enough, the open-source runner ecosystem is real, and institutions need better authoring tools that treat interoperability as architecture. Here is why we built QFlow on Deno and Fresh.

For a long time, assessment authoring has lived in an awkward place.

The LMS or testing platform usually became the place where questions were created, stored, previewed, and eventually locked away. Standards existed, but they were often treated as export features or procurement checkboxes rather than the actual shape of the product.

That approach works right up until institutions need content to move.

Different LMSes. Different delivery systems. Imported banks from older tools. Accessibility requirements that cannot be shrugged off. AI-assisted authoring that can generate plenty of text but still leaves the hard part unsolved: producing assessment content that is structured, valid, reviewable, accessible, and portable.

That is the context behind QFlow. We are building it as a QTI-first authoring system, not as a generic quiz builder that happens to emit XML at the end. You can see the project at QFlowLearn.com.

Why QTI 3.0 feels different now

What many people still call IMS QTI 3.0 is now maintained under 1EdTech. It is also more practical to build on than older memories of QTI might suggest.

Part of that is the standard itself. The current QTI overview is public. The implementation guide is public. The procurement language is public. That matters because standards become much more useful once institutions can actually evaluate, request, and verify them in concrete terms.

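The accessibility story is stronger now too. QTI 3 is not only about serializing question types. It has a more serious model for carrying support content with the item itself. Examples include glossary definitions and keyword translations stored in item-level catalogs, which a delivery system can surface based on a learner's needs. It also specifies interoperable delivery behavior, instead of assuming accessibility will be repaired somewhere downstream.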

The other big shift is ecosystem proof. There is already a credible open-source runtime story in the AMP Up QTI 3 Player/TestRunner, with a public demo at qti.amp-up.io. That changes the calculus. Once there is real delivery-side infrastructure, a new authoring system stops looking premature and starts looking overdue.

Why this is the right time for a new authoring system

The timing is not only about the standard. It is also about the state of the product landscape around it.

Many older authoring environments still carry the assumptions of an earlier web: server-heavy workflows, platform-bound storage, weak import stories, fragile portability, and a general expectation that content will stay where it was born. That increasingly does not match institutional reality.

Institutions inherit item banks from older systems. They merge platforms. They need content to survive migration. They want better accessibility. And now they are starting to use AI-assisted authoring, which makes the standards question more important, not less. It is easier than ever to generate questions. It is still hard to generate assessment content that can survive outside a single vendor's platform.

That is the bar we care about. Not just faster authoring. Better authoring with a longer half-life.

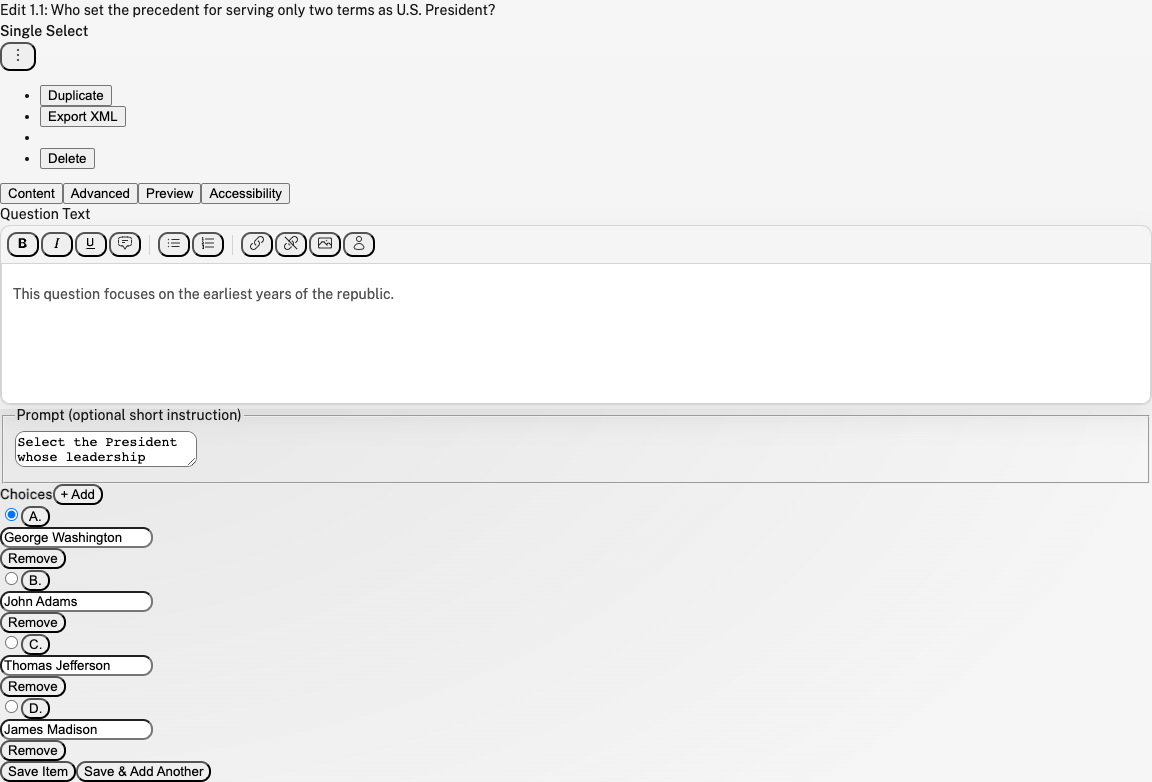

In QFlow today, that means the system can import existing QTI 3.0, QTI 2.1/2.2, and QTI 1.2/1.2.1 content; keep question revisions in Postgres; validate output against vendored QTI 3 XSDs locally; and export standards-based assessment content through the same product paths used for day-to-day work. The point is to meet institutions where their content actually is instead of pretending everything starts greenfield.
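
Import has to start by working out which generation of QTI a file belongs to. The following is a minimal sketch in TypeScript. It assumes that item and test files carry the standard QTI 2.1, 2.2, or 3.0 namespace on their root element, and that QTI 1.2 files use a `questestinterop` root. `detectQtiVersion` is a hypothetical name, not QFlow's API. The regex-based root lookup is deliberately naive, and a real importer would use an XML parser:

```typescript
// Hypothetical helper: classify an imported item/test XML file by QTI generation.
// Assumption: QTI 2.x/3.0 roots declare their standard namespace, and QTI 1.2
// content uses a <questestinterop> root element.

export type QtiVersion = "3.0" | "2.2" | "2.1" | "1.2" | "unknown";

const NAMESPACE_VERSIONS: Array<[string, QtiVersion]> = [
  ["http://www.imsglobal.org/xsd/imsqtiasi_v3p0", "3.0"],
  ["http://www.imsglobal.org/xsd/imsqti_v2p2", "2.2"],
  ["http://www.imsglobal.org/xsd/imsqti_v2p1", "2.1"],
];

export function detectQtiVersion(xml: string): QtiVersion {
  // First start tag whose name begins with a letter or underscore; this skips
  // the <?xml ...?> declaration and <!-- comments --> / <!DOCTYPE> markers.
  const root = xml.match(/<([A-Za-z_][\w.:-]*)([^>]*)>/);
  if (!root) return "unknown";

  const [, name, attrs] = root;
  // Strip any namespace prefix, e.g. "qti:questestinterop" -> "questestinterop".
  const localName = name.includes(":") ? name.split(":").pop()! : name;
  if (localName === "questestinterop") return "1.2";

  // Look for a known namespace URI among the root element's attributes.
  for (const [ns, version] of NAMESPACE_VERSIONS) {
    if (attrs.includes(ns)) return version;
  }
  return "unknown";
}
```

A sniffing step like this only routes the file to the right converter. The converted output still has to pass schema validation before it is trusted.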

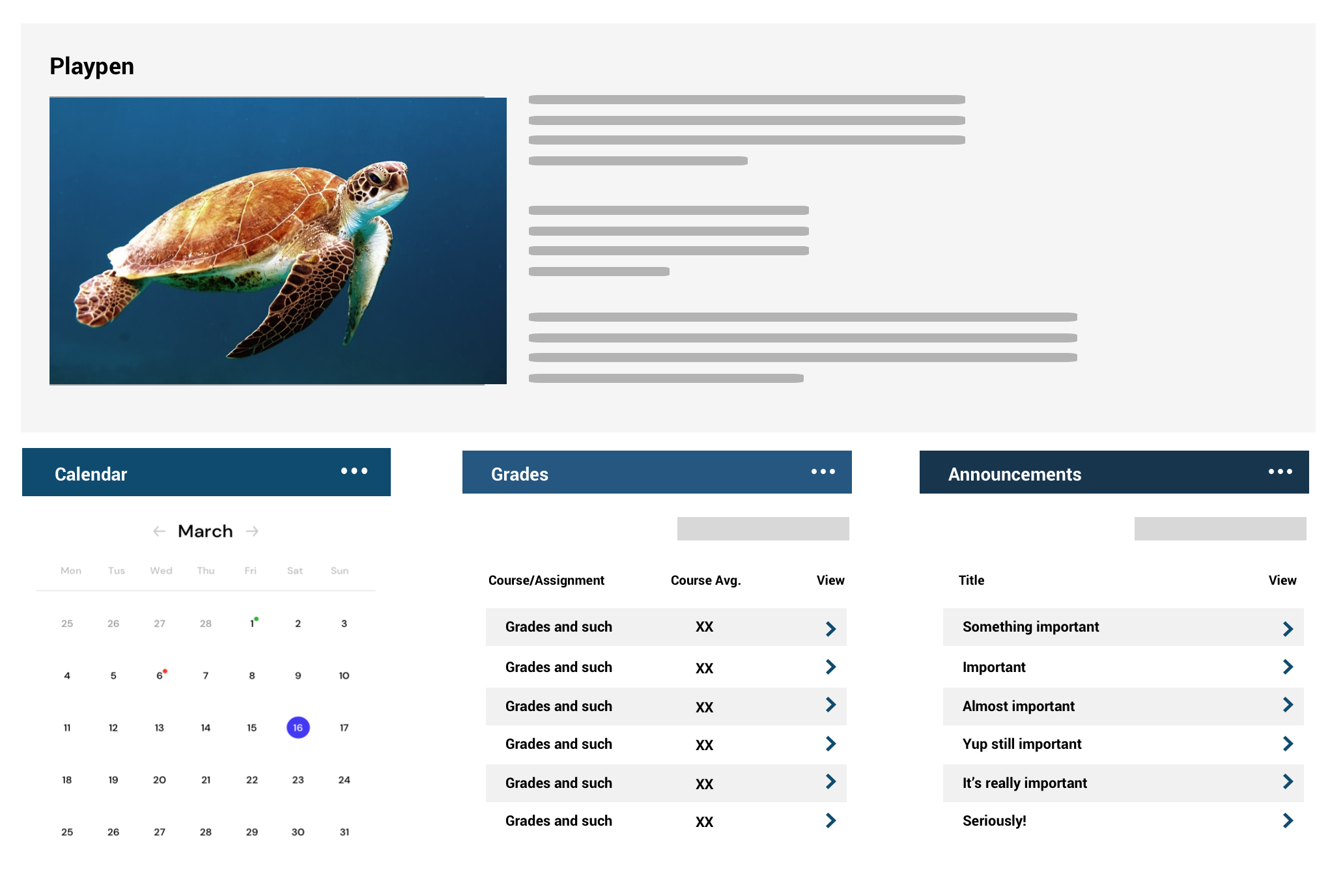

A QFlow demo assessment editing flow: structured question content, preview, accessibility, and export-minded authoring in the same workspace.

Why we chose Deno

We also wanted the stack to reflect the product philosophy.

A standards-heavy product can drown in incidental complexity if the application architecture is fragmented. We did not want one runtime for the frontend, another for the backend, and a permanent translation problem between the authoring UI and the import, validation, and export services behind it.

Deno was appealing for the opposite reason: it keeps the stack coherent.

- TypeScript is first-class from the start instead of being layered on later.

- The tooling is built in: formatting, linting, task running, testing, and runtime config all live in one place.

- Deno's permission model, which requires file, network, and subprocess access to be granted explicitly, is a good fit for a system that has to handle authored content, uploads, and integrations carefully.

- The development loop is fast without a lot of framework glue and build-system ceremony.

Fresh made the choice even better. QFlow can stay mostly server-rendered, then hydrate only the places that really need client-side behavior. Rich editing and interaction builders should be interactive. The entire application shell does not need to pay a single-page-app tax just to support them.

In practice, the stack looks like this:

- Deno 2.5+, Fresh 2.x, and Vite for a TypeScript-first application shell

- Preact islands and signals for focused client-side interactivity

- ProseKit for the rich-text editing layer

- Postgres plus Drizzle as the canonical authoring store

- Cloudflare R2-backed upload handling for portable asset references

- Local `xmllint` validation against vendored QTI 3 XSDs
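
Local validation can be a thin wrapper around `xmllint`. The following is a minimal sketch that assumes the caller supplies the path to the vendored XSD. `--noout` and `--schema` are standard `xmllint` options. `xmllintArgs`, `parseXmllintOutput`, and the error-line pattern are illustrative assumptions, based on xmllint's usual `file:line: message` diagnostics, and are not QFlow's code:

```typescript
// Hypothetical helpers around a local `xmllint --noout --schema <xsd> <file>` run.
// Process spawning is left to the caller; only argument building and output
// parsing are shown here.

export interface SchemaIssue {
  file: string;
  line: number;
  message: string;
}

export interface ValidationResult {
  valid: boolean;
  issues: SchemaIssue[];
}

/** Argument list for validating one XML file against one XSD. */
export function xmllintArgs(schemaPath: string, xmlPath: string): string[] {
  return ["--noout", "--schema", schemaPath, xmlPath];
}

/**
 * Parse xmllint's stderr. Schema errors typically look like
 * "item.xml:12: element qti-simple-choice: Schemas validity error : ...",
 * followed by a summary line "item.xml validates" or "item.xml fails to validate".
 */
export function parseXmllintOutput(stderr: string, exitCode: number): ValidationResult {
  const issues: SchemaIssue[] = [];
  for (const raw of stderr.split("\n")) {
    const line = raw.trimEnd(); // tolerate CRLF output
    const m = line.match(/^(.+?):(\d+): (.*)$/);
    if (m) issues.push({ file: m[1], line: Number(m[2]), message: m[3] });
  }
  // Treat any non-zero exit status or any reported issue as a failure.
  return { valid: exitCode === 0 && issues.length === 0, issues };
}
```

Under Deno, the argument list could be passed to `new Deno.Command("xmllint", { args })`. The decoded `stderr` and exit `code` from `.output()` would then be fed to the parser. Spawning the process requires `--allow-run`, which is one place the permission model shows up in day-to-day code.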

That combination lets us keep one language, one deployment story, and one mental model across authoring UI, import logic, validation, storage, and export routes. For this kind of product, that simplicity is not aesthetic. It is operational.

What we are actually building

QFlow is not meant to be a toy demo of one interaction type. The current system already handles single-select, multi-select, ordering, matching, and text-entry items; keeps versioned question revisions; rewrites imported assets into portable upload references; and builds QTI 3 assessment output through the same code paths the product uses day to day.
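
Rewriting imported assets mostly means mapping package-relative paths to stored objects. The following is a minimal sketch. It assumes the importer has already uploaded each package file and recorded a map from its package path to a storage key. The `upload:` reference scheme, `rewriteAssetRefs`, and the attribute list are hypothetical. A production version would also work on a parsed XML tree rather than a regex:

```typescript
// Hypothetical sketch: rewrite package-relative asset paths in imported item XML
// into portable upload references. The "upload:" scheme is an assumption.

/** Maps a path inside the imported package (e.g. "images/fig1.png") to a storage key. */
export type AssetKeyMap = ReadonlyMap<string, string>;

/** Resolve a relative reference against the item file's directory, handling "." and "..". */
function resolvePackagePath(itemDir: string, ref: string): string {
  const out: string[] = [];
  for (const part of [...itemDir.split("/"), ...ref.split("/")]) {
    if (part === "" || part === ".") continue;
    if (part === "..") out.pop();
    else out.push(part);
  }
  return out.join("/");
}

export function rewriteAssetRefs(
  xml: string,
  itemDir: string,
  keys: AssetKeyMap,
): { xml: string; missing: string[] } {
  const missing: string[] = [];
  // Match src/href/data attributes preceded by whitespace (so "data-src" is not hit).
  const rewritten = xml.replace(
    /(\s)(src|href|data)="([^"]+)"/g,
    (whole: string, ws: string, attr: string, ref: string) => {
      // Leave absolute URLs (any scheme) and in-document fragments untouched.
      if (/^[a-z][a-z0-9+.-]*:/i.test(ref) || ref.startsWith("#")) return whole;
      const path = resolvePackagePath(itemDir, ref);
      const key = keys.get(path);
      if (!key) {
        missing.push(path); // keep the original reference and report it
        return whole;
      }
      return `${ws}${attr}="upload:${key}"`;
    },
  );
  return { xml: rewritten, missing };
}
```

Unresolved paths are returned rather than silently dropped. This lets an import flag items whose media did not make it across.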

That is another reason we liked Deno and Fresh. Fresh's file-based route handlers under `routes/` really are the backend. The import flow, export flow, and authoring UI live close together. There is less overhead between the data model and how the product actually behaves.
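
One way to keep the data model and export close together is to serialize a typed item straight to QTI 3. The following is a minimal sketch for the two choice-based item types. It assumes a hypothetical `ChoiceItem` shape, which is not QFlow's schema. Single-select maps to `cardinality="single"` with `max-choices="1"`. Multi-select maps to `cardinality="multiple"` with `max-choices="0"`, which means no limit. Scoring uses the standard match-correct response-processing template, whose URL is worth checking against the implementation guide:

```typescript
// Hypothetical sketch of a typed choice item serialized to QTI 3.0 XML.
// The ChoiceItem shape is illustrative; element names follow the QTI 3 ASI vocabulary.

interface Choice {
  id: string; // must be a valid QTI identifier
  text: string;
}

interface ChoiceItem {
  kind: "single-select" | "multi-select";
  identifier: string;
  title: string;
  prompt: string;
  choices: Choice[];
  correct: string[]; // ids of the correct choices
}

const QTI3_NS = "http://www.imsglobal.org/xsd/imsqtiasi_v3p0";
const MATCH_CORRECT = "https://purl.imsglobal.org/spec/qti/v3p0/rptemplates/match_correct.xml";

/** Escape text for use in XML element content and double-quoted attributes. */
function esc(s: string): string {
  return s
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

export function toQti3(item: ChoiceItem): string {
  const single = item.kind === "single-select";
  const cardinality = single ? "single" : "multiple";
  const maxChoices = single ? 1 : 0; // 0 = no upper limit on selections
  const values = item.correct.map((id) => `<qti-value>${esc(id)}</qti-value>`).join("");
  const choices = item.choices
    .map((c) => `<qti-simple-choice identifier="${esc(c.id)}">${esc(c.text)}</qti-simple-choice>`)
    .join("");

  return [
    `<qti-assessment-item xmlns="${QTI3_NS}" identifier="${esc(item.identifier)}" title="${esc(item.title)}" adaptive="false" time-dependent="false">`,
    `<qti-response-declaration identifier="RESPONSE" cardinality="${cardinality}" base-type="identifier">`,
    `<qti-correct-response>${values}</qti-correct-response>`,
    `</qti-response-declaration>`,
    `<qti-outcome-declaration identifier="SCORE" cardinality="single" base-type="float"/>`,
    `<qti-item-body>`,
    `<qti-choice-interaction response-identifier="RESPONSE" max-choices="${maxChoices}">`,
    `<qti-prompt>${esc(item.prompt)}</qti-prompt>`,
    choices,
    `</qti-choice-interaction>`,
    `</qti-item-body>`,
    `<qti-response-processing template="${MATCH_CORRECT}"/>`,
    `</qti-assessment-item>`,
  ].join("\n");
}
```

In a single-language stack, the same typed object can feed the editor, the preview, and the export route. A change to the model then surfaces as a type error everywhere it is used, rather than as drift between separate services.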

We are not trying to build a standards slide deck. We are trying to build an authoring system people can actually use.

Closing thought

The short version is this: QTI 3.0 is mature enough, the open-source ecosystem around it is real enough, and institutions need better authoring tools than the ones they inherited.

If the standard is going to matter, it has to matter in the product itself: in import, in authoring, in validation, in preview, in export, and in the day-to-day ergonomics of the tool. That is why we built QFlow the way we did, and why Deno felt like the right foundation for it.

References

- QFlowLearn: https://qflowlearn.com/

- 1EdTech QTI overview: https://www.1edtech.org/standards/qti

- QTI v3 Best Practices and Implementation Guide: https://www.imsglobal.org/spec/qti/v3p0/impl

- Suggested QTI requirements for procurement and RFPs: https://www.1edtech.org/standards/qti/rfp-procurement-agreements

- AMP Up QTI 3 Player/TestRunner: https://github.com/amp-up-io/qti3-item-player

- AMP Up live demo: https://qti.amp-up.io

- Deno: https://deno.com/

- Fresh: https://fresh.deno.dev/